Why a Contextual Data Layer Is Required for Trust, Scale, and Production

Jakki Geiger, CMO at Arango, in conversation with Ravi Marwaha, Chief Product & Technology Officer at Arango

TL;DR

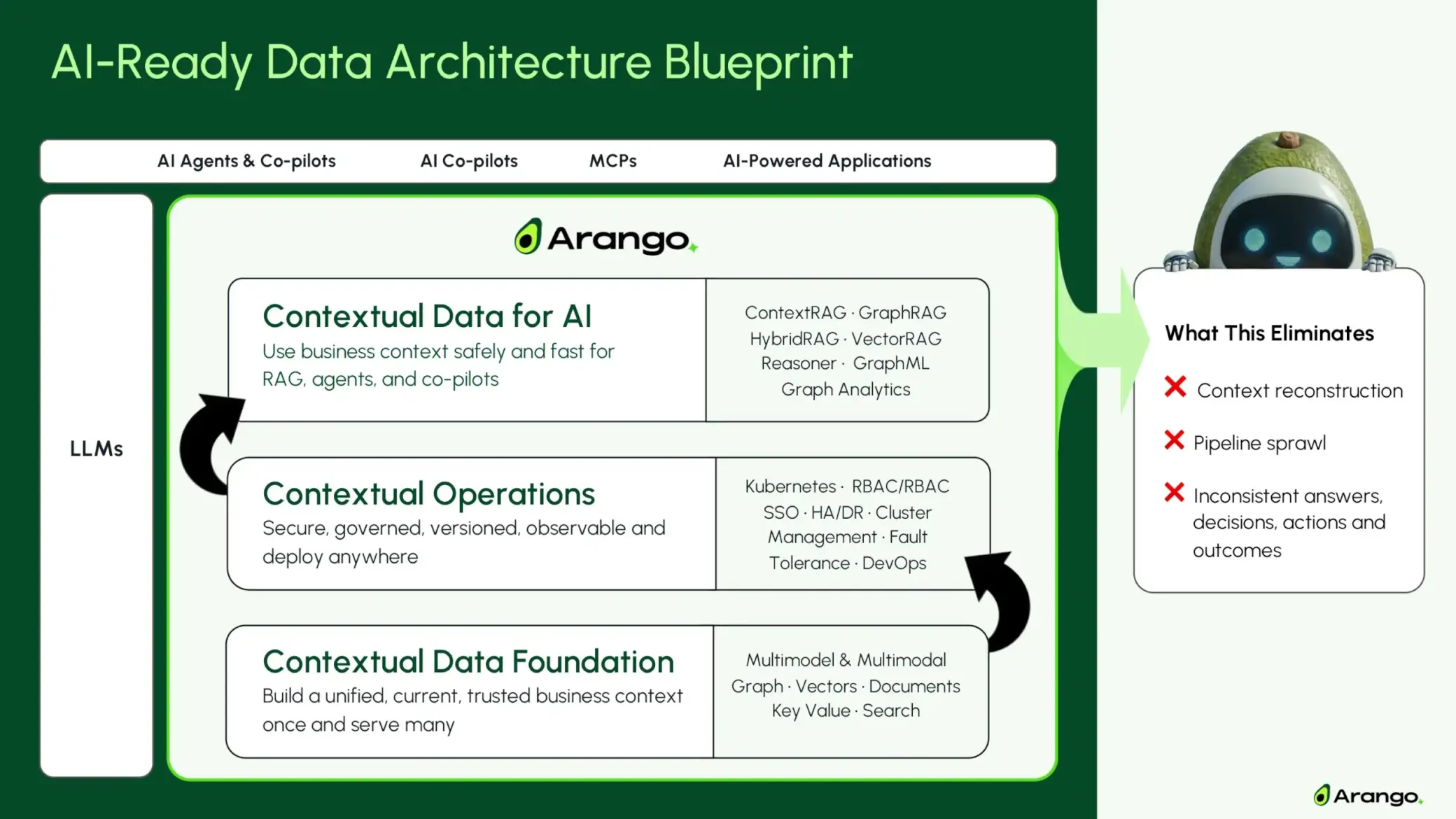

Enterprise AI fails in production when business context is fragmented across systems. When meaning, relationships, time, and trust are managed separately, AI systems are forced to reconstruct context at runtime—an approach that works in demos but breaks at scale. A contextual data layer provides a unified, current, and trusted view of business reality, so every model, agent, and co-pilot operates on the same shared context. Enterprise AI ultimately reflects the data strategy beneath it: manage context once, reuse it everywhere, and AI outcomes compound instead of fragmenting.

Jakki: How do you define a contextual data layer?

Ravi: A contextual data layer is a bridge between enterprise data systems and LLMs. It connects fragmented enterprise data to the models and provides the shared business context that agents, co-pilots, and chatbots require.

Its job is to make data truly AI-ready by ensuring AI systems can always answer four fundamental questions: what things mean, how they relate, when they were true, and where they came from.

That’s what AI-ready data actually means in practice. Business meaning, relationships, and state are preserved consistently, so AI doesn’t have to reconstruct context at runtime. Instead, every model, agent, or application starts from the same shared understanding of the business.

This layer gives AI a unified, current, and trusted view of business context — turning fragmented, out-of-sync data from databases, data platforms, and operational systems into a single source of context that AI can reason over safely in production.

It doesn’t replace graphs, vectors, or systems of record.

It unifies them, so every AI system sees the same version of reality.

Specifically, a contextual data layer manages and delivers context across:

- Meaning — shared business semantics and definitions

- Relationships — how customers, products, incidents, policies, and systems connect

- Time — what was true when, including change history and current state

- Provenance and trust — where information came from, how it evolved, and who can use it

- Multimodal signals — text, code, logs, and media connected to the same business context

- AI-ready delivery — retrieval, ranking, and citation that enterprise AI can depend on

Under the hood, this requires a multimodel data platform, one that can natively represent graphs, documents, and vectors together. Without that, context gets fragmented and has to be stitched back together across separate systems.

By providing this unified layer, AI systems stop guessing. They understand what things mean, how they relate, when they were true, and where they came from — so reasoning stays consistent, actions are explainable, and outcomes improve as AI moves into production.
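To make the four questions concrete, here is a minimal Python sketch of how one store could hold meaning, relationships, time, and provenance side by side. It is an illustration under stated assumptions, not Arango's API: `Entity`, `Relationship`, `ContextStore`, and `context_as_of` are hypothetical names invented for this example, and the in-memory lists stand in for a real multimodel database.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Optional


@dataclass
class Entity:
    """A business object: document fields plus an optional embedding (hypothetical shape)."""
    id: str
    kind: str                       # meaning: the shared business term, e.g. "customer"
    attributes: dict
    embedding: list[float] = field(default_factory=list)


@dataclass
class Relationship:
    """A graph edge that also records time and provenance."""
    source_id: str
    target_id: str
    label: str                      # relationship: how the two entities connect
    valid_from: date                # time: when the fact became true
    valid_to: Optional[date]        # None means the fact is still true
    origin: str                     # provenance: the system the fact came from

    def holds_on(self, day: date) -> bool:
        started = self.valid_from <= day
        not_ended = self.valid_to is None or day < self.valid_to
        return started and not_ended


class ContextStore:
    """Toy in-memory stand-in for a shared contextual data layer."""

    def __init__(self) -> None:
        self.entities: dict[str, Entity] = {}
        self.relationships: list[Relationship] = []

    def add_entity(self, entity: Entity) -> None:
        self.entities[entity.id] = entity

    def relate(self, relationship: Relationship) -> None:
        self.relationships.append(relationship)

    def context_as_of(self, entity_id: str, day: date) -> list[dict]:
        """Return the outgoing facts about an entity that were true on `day`."""
        facts = []
        for rel in self.relationships:
            if rel.source_id == entity_id and rel.holds_on(day):
                target = self.entities[rel.target_id]
                facts.append({
                    "relationship": rel.label,
                    "target_id": target.id,
                    "target_kind": target.kind,
                    "origin": rel.origin,
                })
        return facts


# Example data (invented for illustration): a customer who changed plans.
store = ContextStore()
store.add_entity(Entity("cust-1", "customer", {"name": "Acme"}))
store.add_entity(Entity("plan-basic", "plan", {"tier": "basic"}))
store.add_entity(Entity("plan-pro", "plan", {"tier": "pro"}))
store.relate(Relationship("cust-1", "plan-basic", "subscribed_to",
                          date(2023, 1, 1), date(2024, 1, 1), "billing"))
store.relate(Relationship("cust-1", "plan-pro", "subscribed_to",
                          date(2024, 1, 1), None, "billing"))

past = store.context_as_of("cust-1", date(2023, 6, 1))
now = store.context_as_of("cust-1", date(2024, 6, 1))
```

In this sketch, `past` contains only the basic-plan subscription and `now` only the pro-plan one, each tagged with the system it came from. Any agent that asks the same question for the same date gets the same answer, which is the property the layer is meant to guarantee.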

The contextual data foundation bridges fragmented enterprise data and LLMs, supplying the shared business context agents, co-pilots, and chatbots need to operate reliably.

Jakki: Is this something every AI team needs?

Ravi: No. If you’re working on a single, static use case with one data type, simpler tools are often enough.

A contextual data layer becomes critical when relationships are dynamic and multiple agents or teams need the same up-to-date view of the business to operate reliably.

Jakki: Why can’t teams just stitch these capabilities together across existing systems?

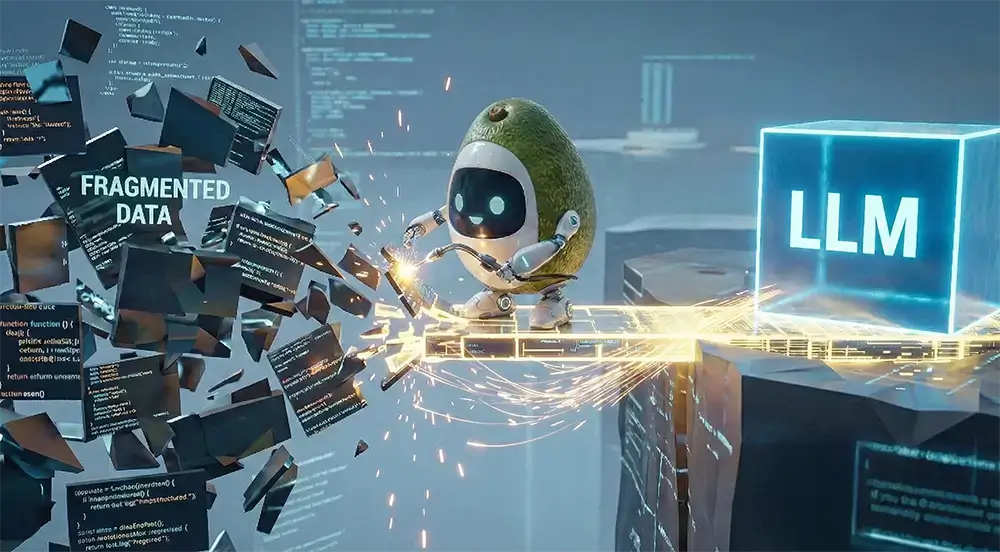

Ravi: Because at scale, every new system or use case multiplies the amount of business context that has to be reconstructed.

When meaning, relationships, time, and trust are spread across different systems, every co-pilot or agent has to reconstruct its own view of context on the fly. As data changes and use cases multiply, those reconstructions drift, leading to inconsistent answers, outdated information, and logic that’s increasingly hard to maintain.

Stitching this together can work for demos, but it fails in production. The only way to meet these requirements reliably is to manage business context as one shared layer, so every AI system operates on the same version of reality as data and use cases scale.
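The drift described in this answer can be shown with a toy Python sketch. Everything here is hypothetical and deliberately simplified: `SystemOfRecord`, `StitchedAgent`, and `SharedContextAgent` are invented names, and a single dictionary stands in for real business data.

```python
class SystemOfRecord:
    """Stand-in for an operational system that owns a business fact."""

    def __init__(self) -> None:
        self.customer_tier = {"cust-1": "basic"}


class StitchedAgent:
    """Rebuilds its own context once and keeps a private copy."""

    def __init__(self, source: SystemOfRecord) -> None:
        self._snapshot = dict(source.customer_tier)  # copied at build time

    def tier(self, customer_id: str) -> str:
        return self._snapshot[customer_id]


class SharedContextAgent:
    """Reads through one shared context object on every call."""

    def __init__(self, shared: SystemOfRecord) -> None:
        self._shared = shared

    def tier(self, customer_id: str) -> str:
        return self._shared.customer_tier[customer_id]


source = SystemOfRecord()

support_bot = StitchedAgent(source)       # built before the change
source.customer_tier["cust-1"] = "pro"    # the business fact changes
sales_bot = StitchedAgent(source)         # built after the change

# Two stitched agents now disagree about the same customer.
stitched_answers = {support_bot.tier("cust-1"), sales_bot.tier("cust-1")}

# Two agents reading the shared context agree.
shared_answers = {
    SharedContextAgent(source).tier("cust-1"),
    SharedContextAgent(source).tier("cust-1"),
}
```

Here `stitched_answers` holds two different tiers while `shared_answers` holds one. Real systems drift for subtler reasons (caches, pipelines, duplicated definitions), but the mechanism is the same: each private reconstruction ages independently.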

Why This Becomes Critical at Scale

A contextual data layer matters most when AI moves into production.

As more agents, workflows, and data sources come online, rebuilding business context for each use case leads to drift, inconsistency, and brittle systems.

Go Deeper

Multimodel Data Platforms: The Next Evolution Beyond Vector & Graph Silos

See how multimodel platforms make it possible to manage business context as a shared layer—without Frankenstacks.

Enterprise AI doesn’t fail because models are weak. It fails when business context isn’t unified, current, and trusted.

— Ravi Marwaha, CPTO, Arango

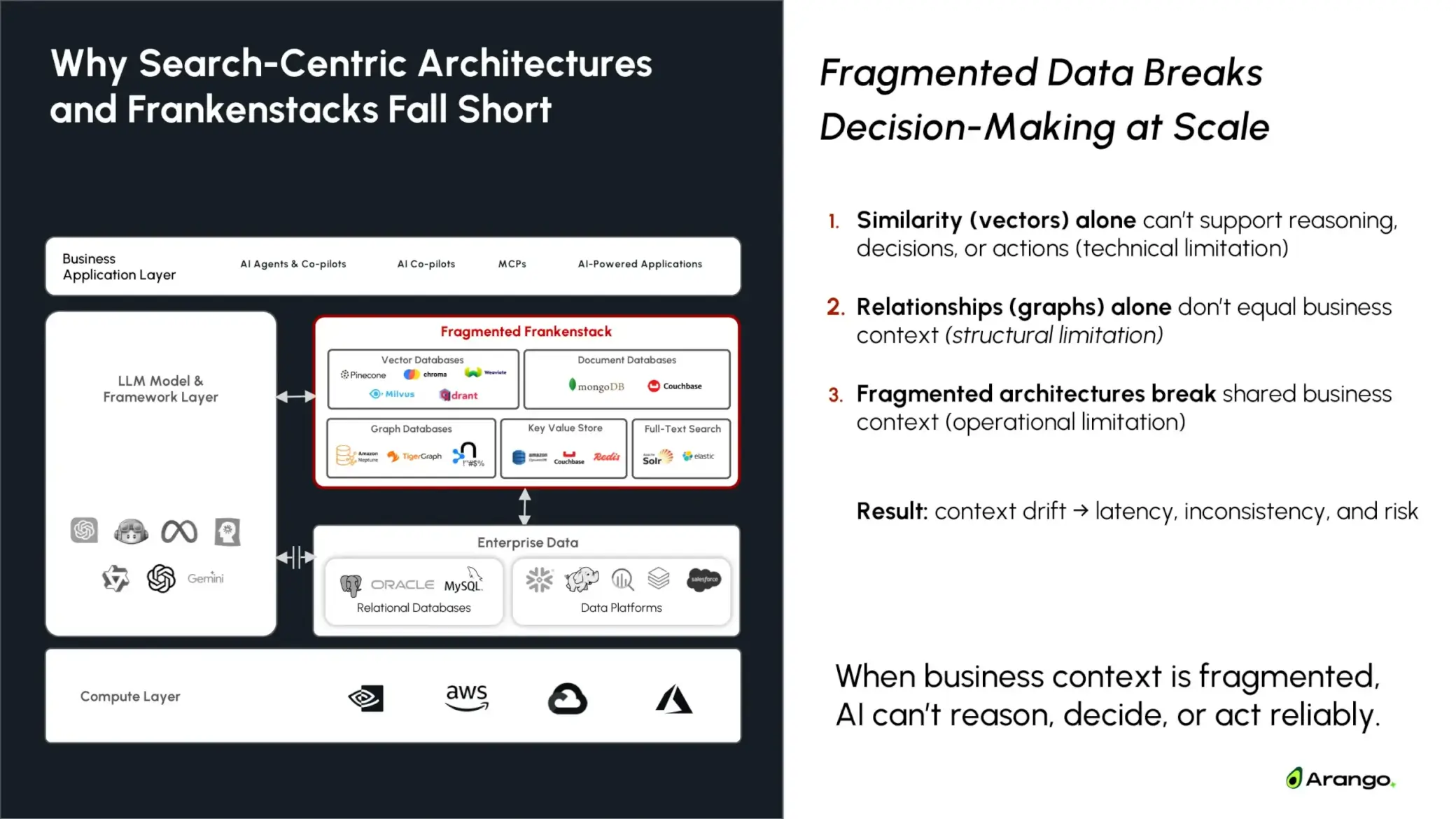

Jakki: Why doesn’t stitching together best-of-breed tools work?

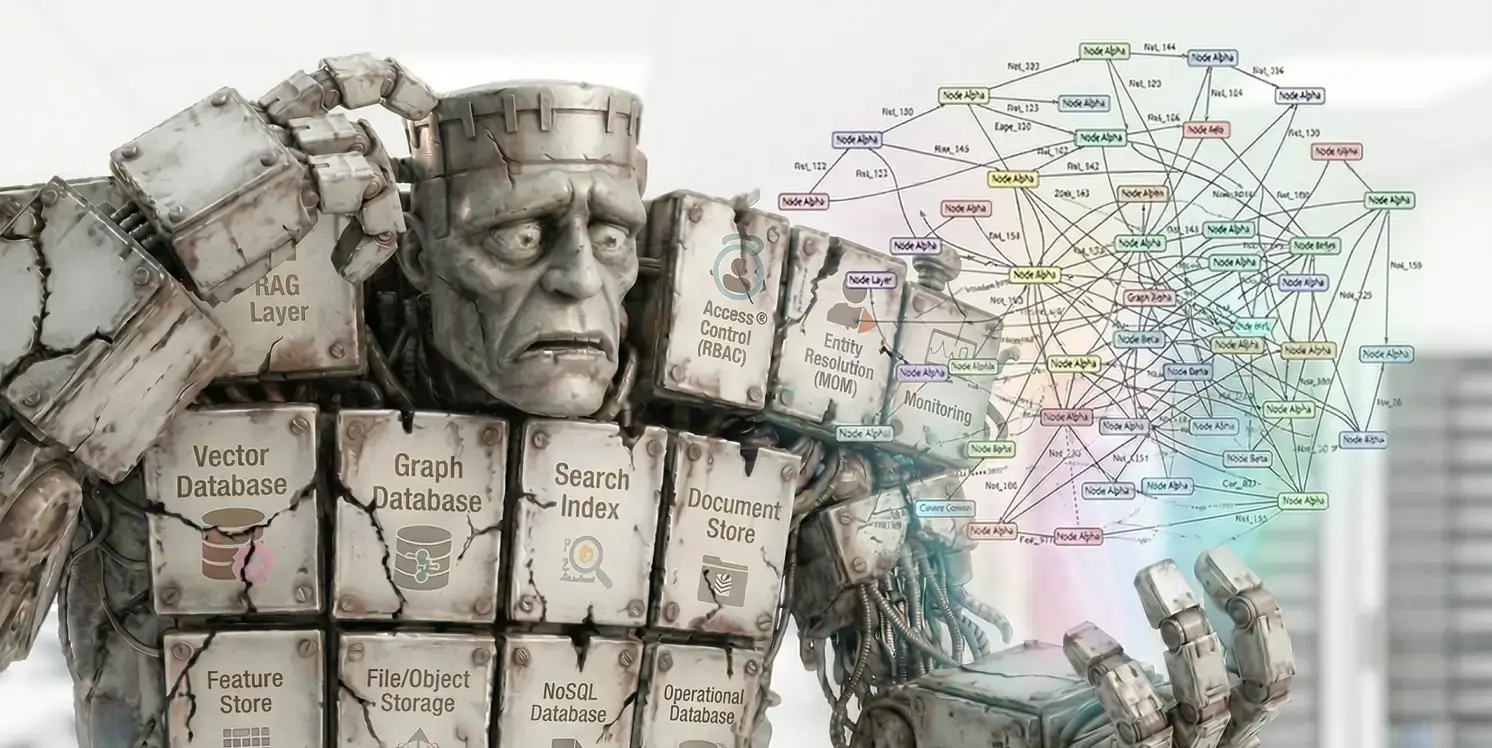

Ravi: Because it turns into a Frankenstack.

Teams end up gluing together a vector database, a graph database, metadata layers, access controls, caches, prompt logic, and application code to reconstruct context on the fly. Each co-pilot or agent assembles its own partial version of reality, which leads to inconsistent answers, outdated information, and brittle systems that are impossible to govern. The real cost is that every new co-pilot becomes a new integration project, slowing innovation and increasing operational risk.

Search-centric architectures and Frankenstacks fragment business context, preventing AI systems from reasoning, deciding, and acting at scale.

Jakki: What does this actually change for organizations trying to run AI in production and deliver measurable business outcomes?

Ravi: Enterprise AI ultimately reflects the data strategy beneath it. When business context is fragmented, AI outcomes fragment with it.

When context is unified, current, and trusted, results compound. That shows up as faster resolution, safer decisions, higher trust, and lower operational overhead.

Without that data foundation, teams build brittle, one-off co-pilots: good at retrieval, but fragile when accuracy, explainability, or action matter.

A purpose-built data platform changes the trajectory by giving every AI system the same shared view of the business—so innovation compounds instead of resetting with every new use case.

Enterprise AI Production Reality

As AI systems scale, fragmented data architectures introduce latency, increase the cost of change, and make it difficult to keep context consistent across applications and agents. That’s why many AI initiatives stall—not because models fail, but because the data foundation can’t keep up.

What’s missing is a contextual data layer.

Learn why unified, current, and trusted business context is the foundation enterprise AI needs to reach production.

A unified contextual data layer eliminates pipeline sprawl and context reconstruction while improving consistency across AI decisions and actions.

What’s next?

The Definitive Guide to Agentic AI-Ready Data Architecture

Now that you understand what a contextual data layer is, this guide helps you evaluate how to design for it—covering architecture patterns, trade-offs, and decisions that determine whether AI compounds value or debt.

Forrester on Multimodel Data Platforms

Looking for independent validation? Explore how analysts view multimodel data platforms as a foundation for managing business context across graph, vector, and operational data.
